Fractal Fract, Free Full-Text

By a mysterious writer

Description

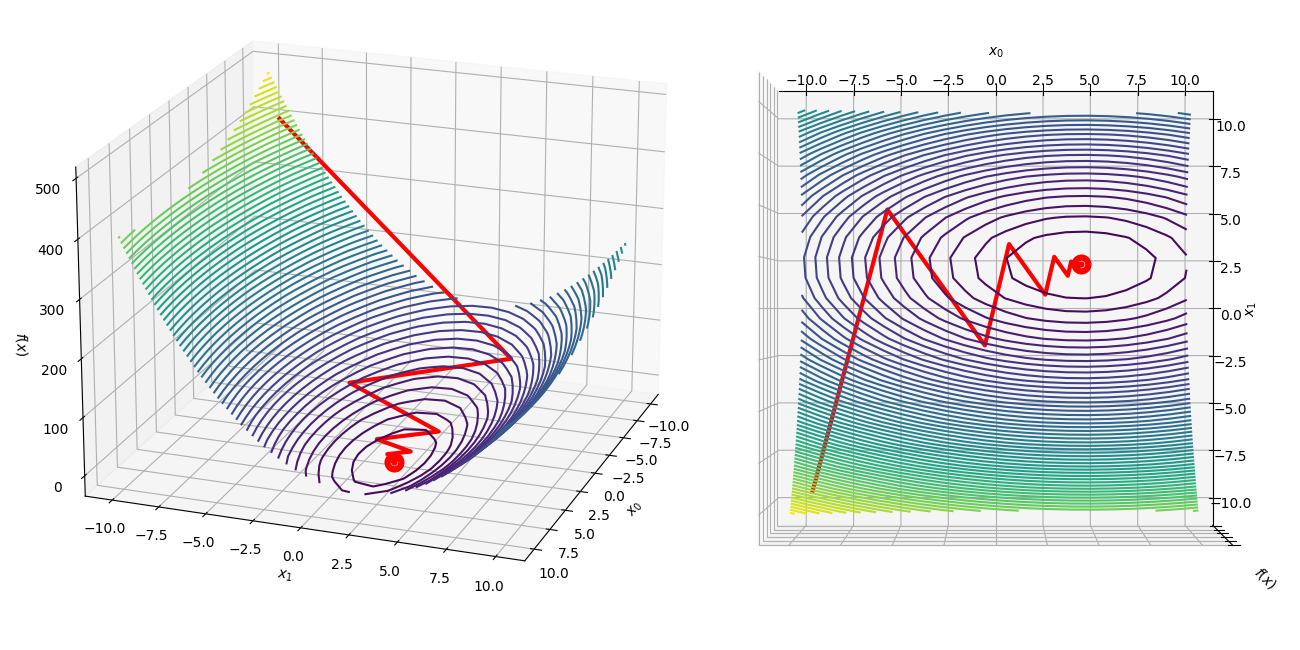

Stochastic gradient descent is the method of choice for solving large-scale optimization problems in machine learning. However, choosing the step sizes effectively is challenging and can greatly influence the performance of stochastic gradient descent algorithms. In this paper, we propose a class of faster adaptive gradient descent methods, named AdaSGD, for solving both convex and non-convex optimization problems. The novelty of the method is a new adaptive step size that depends on the expectation of the past stochastic gradient and its second moment, which makes it efficient and scalable for big data and high parameter dimensions. We show theoretically that the proposed AdaSGD algorithm has a convergence rate of O(1/T) in both convex and non-convex settings, where T is the maximum number of iterations. In addition, we extend AdaSGD to the momentum case and obtain the same convergence rate for AdaSGD with momentum. Several numerical experiments on problems arising in machine learning illustrate the theoretical results and the promise of the proposed method.
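The abstract does not give the AdaSGD step-size formula, so the following is only a minimal sketch of the general idea: a step size driven by running estimates of the past stochastic gradient and of its second moment. It assumes Adam-style exponential moving averages as a stand-in, and the names `adaptive_sgd`, `least_squares_problem`, `base_lr`, `beta1`, and `beta2` are hypothetical, not taken from the paper.

```python
import numpy as np


def least_squares_problem(n_samples=200, dim=5, seed=0):
    """Build a small noise-free least-squares problem (illustrative data only)."""
    rng = np.random.default_rng(seed)
    A = rng.standard_normal((n_samples, dim))
    b = A @ rng.standard_normal(dim)

    def loss(x):
        # Mean squared residual over all samples.
        return 0.5 * float(np.mean((A @ x - b) ** 2))

    def grad_fn(x, i):
        # Stochastic gradient from the single sample i.
        return (A[i] @ x - b[i]) * A[i]

    return loss, grad_fn, n_samples, dim


def adaptive_sgd(grad_fn, x0, n_samples, steps=2000, base_lr=0.05,
                 beta1=0.9, beta2=0.999, eps=1e-8, seed=0):
    """SGD with a scalar adaptive step size.

    ASSUMPTION: this is an Adam-like rule chosen for illustration,
    not the AdaSGD update from the paper.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float).copy()
    m = np.zeros_like(x)  # running estimate of the expected gradient
    v = 0.0               # running estimate of the expected squared gradient norm
    for t in range(1, steps + 1):
        g = grad_fn(x, rng.integers(n_samples))
        m = beta1 * m + (1.0 - beta1) * g
        v = beta2 * v + (1.0 - beta2) * float(g @ g)
        m_hat = m / (1.0 - beta1 ** t)  # bias correction for the zero start
        v_hat = v / (1.0 - beta2 ** t)
        step = base_lr / (np.sqrt(v_hat) + eps)  # adaptive scalar step size
        x -= step * m_hat
    return x


if __name__ == "__main__":
    loss, grad_fn, n, d = least_squares_problem()
    x0 = np.zeros(d)
    x = adaptive_sgd(grad_fn, x0, n)
    print("initial loss:", loss(x0), "final loss:", loss(x))
```

The step size here shrinks when recent gradients have been large and grows as they become small, which is one common way to avoid hand-tuning a fixed learning rate.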

Branching out or inwards? The logic of fractals in Russian studies: Post-Soviet Affairs: Vol 39, No 1-2

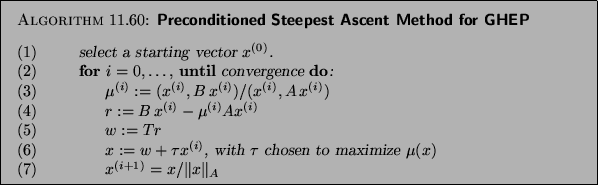

Fractal design concepts for stretchable electronics

Premium Photo: Abstract fractal patterns and shapes, infinite universe, mysterious psychedelic relaxation pattern, dynamic flowing natural forms, sacred geometry, mystical spirals, 3D render

Fractal Trading Strategy With Blaster Techniques 2023

Fractal Level 86 Snowblind on a Budget Full Deal

Fractal Indicator: Definition, What It Signals, and How To Trade

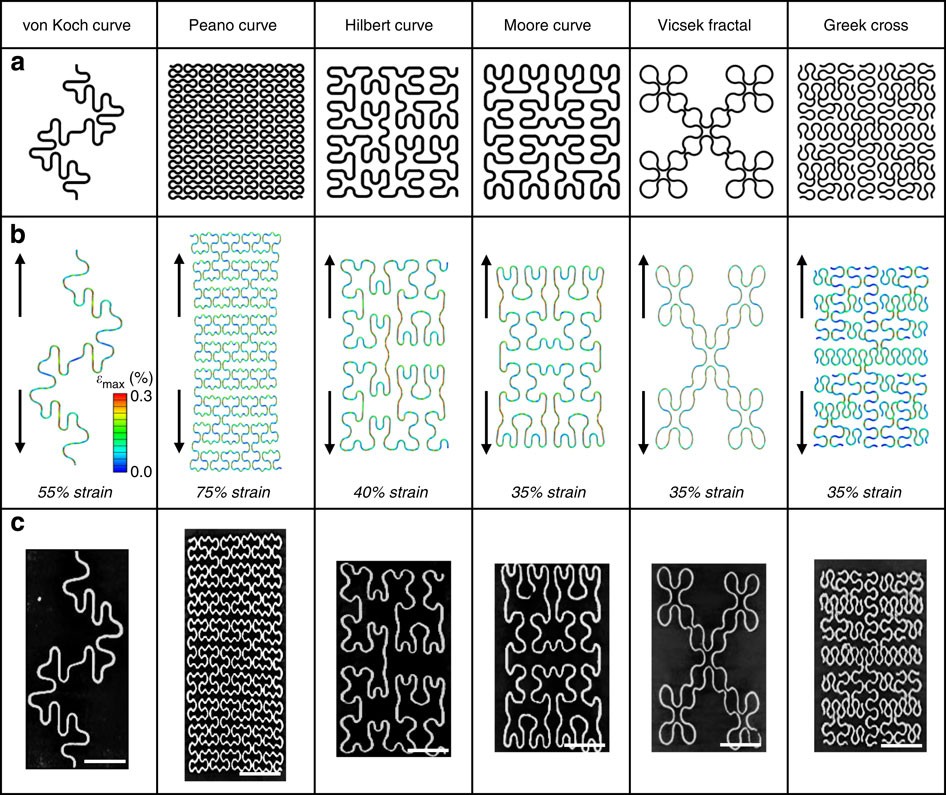

Frontiers Fractal Analysis of Lung Structure in Chronic Obstructive Pulmonary Disease

Fractals and Scaling in Finance: Discontinuity, Concentration, Risk. Selecta Volume E

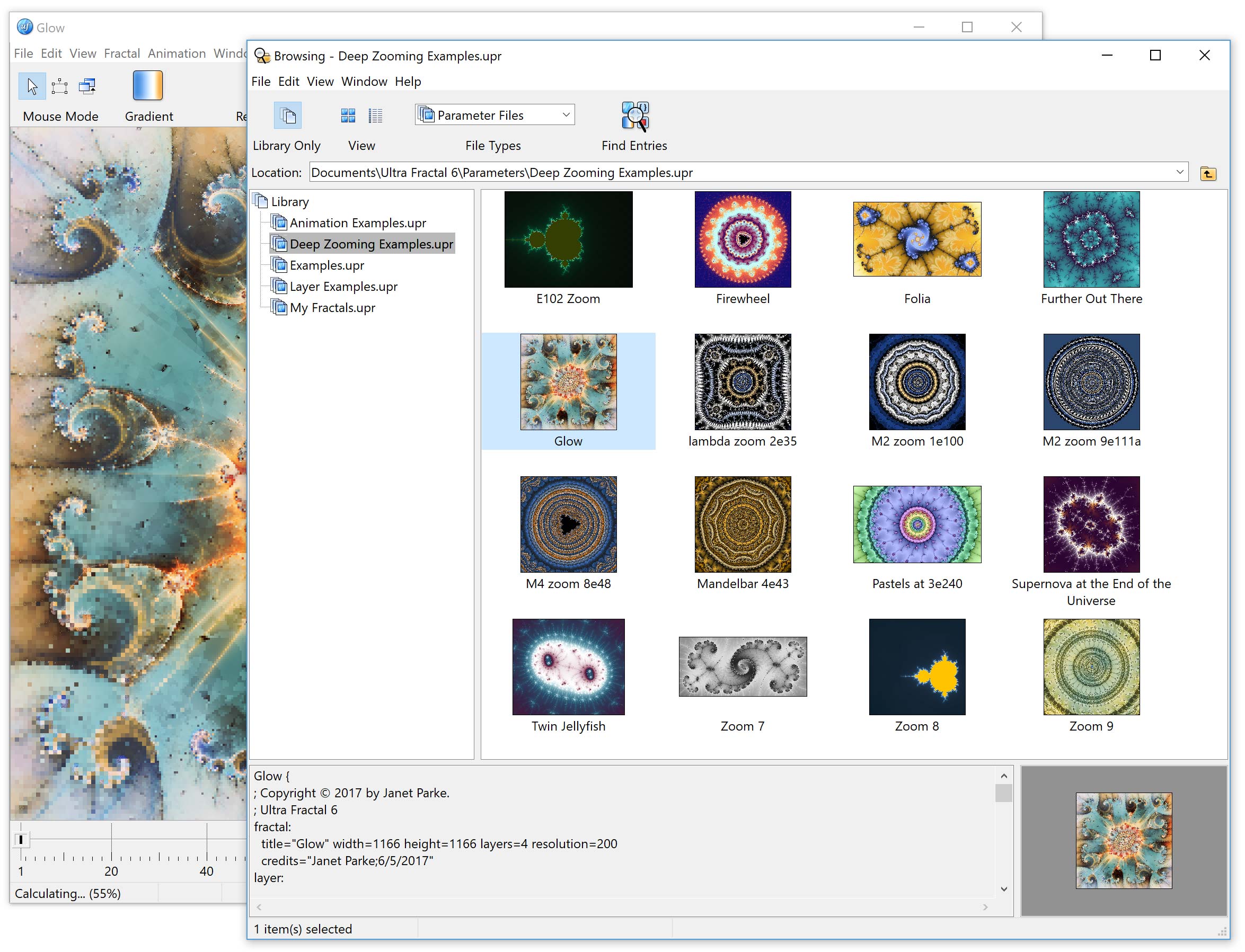

Ultra Fractal: Screen shots
