A Coefficient of Agreement for Nominal Scales - Jacob Cohen, 1960

By a mysterious writer

Description

[PDF] A coefficient of agreement as a measure of thematic classification accuracy.

Intriguing properties of generative classifiers – arXiv Vanity

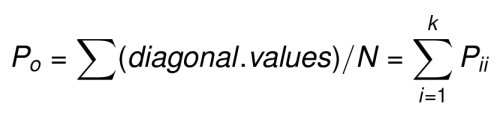

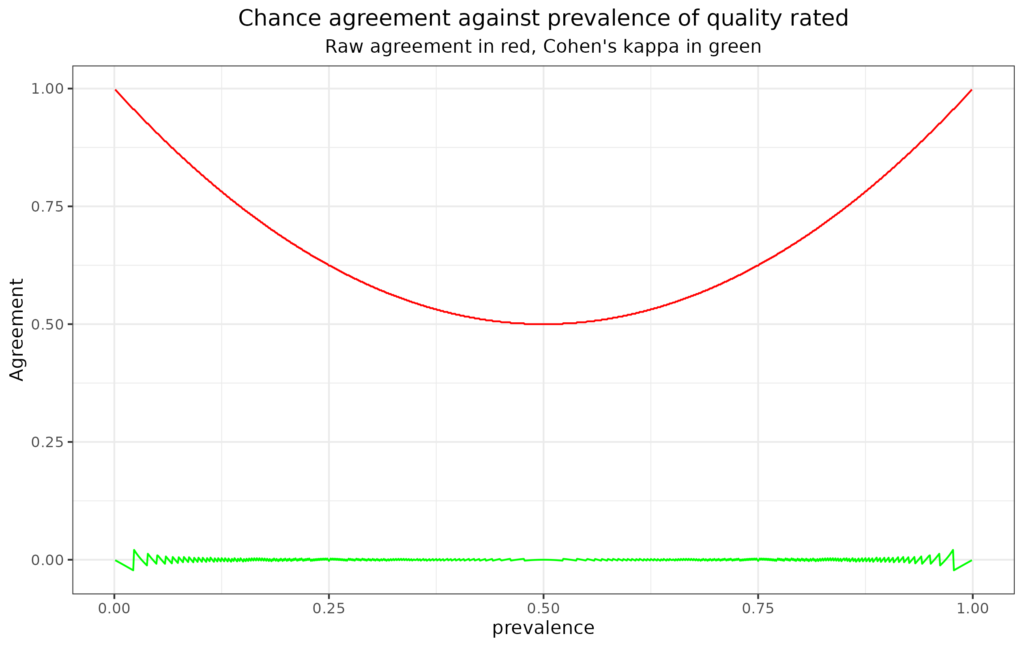

Why kappa? or How simple agreement rates are deceptive

European Journal of Psychological Assessment 2020 by Hogrefe - Issuu

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Cohen's Kappa in R: Best Reference - Datanovia

Varname Varname If in Weight: Kappa - Interrater Agreement, PDF, Statistics

Toward Open-World Human-Robot Interaction: What Types of Gestures Are Used in Task-Based Open-World Referential Communication? - Zhao Han, Ph.D.

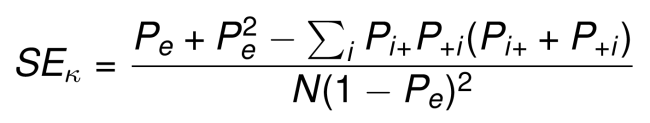

Cohen's kappa - Wikipedia

Weather on the Go: An Assessment of Smartphone Mobile Weather Application Use among College Students in: Bulletin of the American Meteorological Society Volume 99 Issue 11 (2018)

Automatic sleep scoring using patient-specific ensemble models and knowledge distillation for ear-EEG data - ScienceDirect

Psychophysiological Methods - Paul Barrett
